Neural network weights and biases help train an artificial neural network: weights scale the inputs to the neurons in the hidden layers, and biases add a value to them.

Machines often learn the same way humans do — by making mistakes and paying the price for doing so. For example, when you’re first learning to drive, you merge onto the highway and are driving 55 mph in a 65 mph zone. Other drivers are beeping at you, passing you on the left and right, giving you dirty looks, and making rude gestures. You get the message and start driving the speed limit. Cars are still passing you on the left and right, and their drivers appear to be annoyed. You start driving 75 mph to blend in with the traffic. You are rewarded by feeling the excitement of driving faster and by reaching your destination more quickly. Soon, you are so comfortable driving 75 mph that you start driving 80 mph. One day, you hear a siren, and you see a state trooper’s car close behind you with a flashing red light. You get pulled over and issued a ticket for $200, so you slow it down and now routinely drive about 5 to 9 mph over the speed limit.

During this entire scenario, you learn through trial and error by paying for your mistakes. You pay by being embarrassed for driving too slowly, by getting pulled over and issued a warning or ticket, or by getting into or causing an accident. You also learn by being rewarded, but since this article is about the cost function, I won’t get into that.

With machine learning, your goal is to make your machine as accurate as possible, whether the machine’s purpose is to make predictions, identify patterns in medical images, or drive a car. One way to improve accuracy in machine learning is to use a neural network cost function: a mathematical operation that compares the network’s output (the predicted answer) to the targeted output (the correct answer) to measure how accurate the machine is.

In other words, the cost function tells the network how wrong it was, so the network can make adjustments to be less wrong (and more right) in the future. As a result, the network pays for its mistakes and learns by trial and error. The cost is higher when the network is making bad or sloppy classifications or predictions — typically early in its training phase.
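This article doesn’t name a specific cost function, but mean squared error is one common choice when a network makes numeric predictions. The sketch below assumes that choice. The `mean_squared_error` function and the sample values are hypothetical, picked only to show that sloppy, early-training predictions produce a higher cost than better ones.

```python
def mean_squared_error(predicted, target):
    """Average of the squared differences between predicted and correct outputs."""
    if not predicted or len(predicted) != len(target):
        raise ValueError("inputs must be non-empty and the same length")
    return sum((p - t) ** 2 for p, t in zip(predicted, target)) / len(predicted)

# Hypothetical correct answers and two sets of network outputs.
targets = [1.0, 0.0, 1.0, 1.0]
early_guesses = [0.4, 0.7, 0.3, 0.5]   # sloppy predictions, early in training
later_guesses = [0.9, 0.1, 0.8, 0.95]  # closer predictions, after more training

print(mean_squared_error(early_guesses, targets))  # higher cost
print(mean_squared_error(later_guesses, targets))  # lower cost
```

Squaring each difference keeps positive and negative errors from canceling out and penalizes big misses more heavily than small ones.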

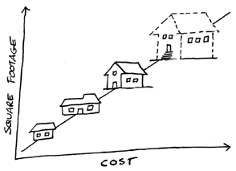

Machines learn different lessons depending on the model. In a simple linear regression model, the machine learns the relationship between an independent variable and a dependent variable; for example, the relationship between the size of a home and its cost. With linear regression, the relationship can be graphed as a straight line, as shown in the figure.

During the learning process, the machine can adjust the model in several ways. It can move the line up or down, left or right, or change the line’s slope, so that it more accurately represents the relationship between home size and cost. The resulting model is what the machine learns. It can then use this model to predict the cost of a home when provided with the home’s size.
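To make the home-price example concrete, here is a minimal sketch that scores two candidate lines using mean squared error as the cost. The home sizes, prices, and the `predict` and `cost` helpers are all hypothetical, made up for illustration. The line with the better slope and intercept gets the lower cost, and the machine can then use that line to predict a price.

```python
# Hypothetical data: home sizes in square feet and sale prices in dollars.
sizes = [1000, 1500, 2000, 2500]
prices = [200_000, 275_000, 350_000, 425_000]

def predict(size, slope, intercept):
    """A straight-line model: price = slope * size + intercept."""
    return slope * size + intercept

def cost(slope, intercept):
    """Mean squared error of the line over the sample data."""
    errors = [(predict(s, slope, intercept) - p) ** 2 for s, p in zip(sizes, prices)]
    return sum(errors) / len(errors)

# Two candidate lines the machine might try while learning.
rough_line = (100, 100_000)   # slope too shallow, line sits too high at the start
better_line = (150, 50_000)   # fits the sample data exactly

print(cost(*rough_line))            # → 2187500000.0
print(cost(*better_line))           # → 0.0
print(predict(1800, *better_line))  # → 320000
```

The better line happens to fit this toy data exactly, so its cost is zero; with real home prices, the cost would normally stay above zero even for the best line.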

The cost function has one major limitation: it does not tell the machine what to adjust, by how much, or in which direction. It only indicates the accuracy of the output. For the machine to be able to make the necessary adjustments, the cost function must be combined with an optimization algorithm that provides the necessary guidance, such as gradient descent, which just happens to be the subject of my next post.
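As a small preview of how the two pieces fit together, the sketch below assumes a one-parameter model (price = slope * size, with hypothetical data) and mean squared error as the cost. `cost` returns only a number saying how wrong the slope is. The hypothetical `cost_gradient` helper is the derivative of that cost: its sign says which direction to move the slope, and its size hints at how far. The loop is a bare-bones gradient descent with an arbitrarily chosen learning rate, not a complete implementation.

```python
# Hypothetical data: size in thousands of square feet, price in thousands of dollars.
sizes = [1.0, 1.5, 2.0]
prices = [150.0, 225.0, 300.0]   # generated from price = 150 * size

def cost(slope):
    """Mean squared error of price = slope * size: a single number, no direction."""
    return sum((slope * s - p) ** 2 for s, p in zip(sizes, prices)) / len(sizes)

def cost_gradient(slope):
    """Derivative of the cost with respect to the slope; its sign gives the direction."""
    return sum(2 * (slope * s - p) * s for s, p in zip(sizes, prices)) / len(sizes)

start = 100.0
print(cost(start))           # how wrong the starting slope is
print(cost_gradient(start))  # negative, so the slope should increase

slope = start
learning_rate = 0.1  # hypothetical step size
for _ in range(50):
    # Step against the gradient to reduce the cost.
    slope -= learning_rate * cost_gradient(slope)

print(round(slope, 2))  # → 150.0
```

The cost alone would only tell the machine that a slope of 100 is wrong; the gradient is what tells it to move the slope upward, toward 150.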


Artificial neural network clustering often uses unsupervised machine learning algorithms to find patterns in your data set.

Artificial neural network regression and classification problems are usually solved using supervised machine learning.